The Developer’s Guide to Responsible AI

AI can help developers move faster, but speed without understanding creates fragile systems, security risks, and technical debt. Responsible AI use is not about avoiding AI. It is about using it without surrendering your judgment, ethics, or engineering standards.

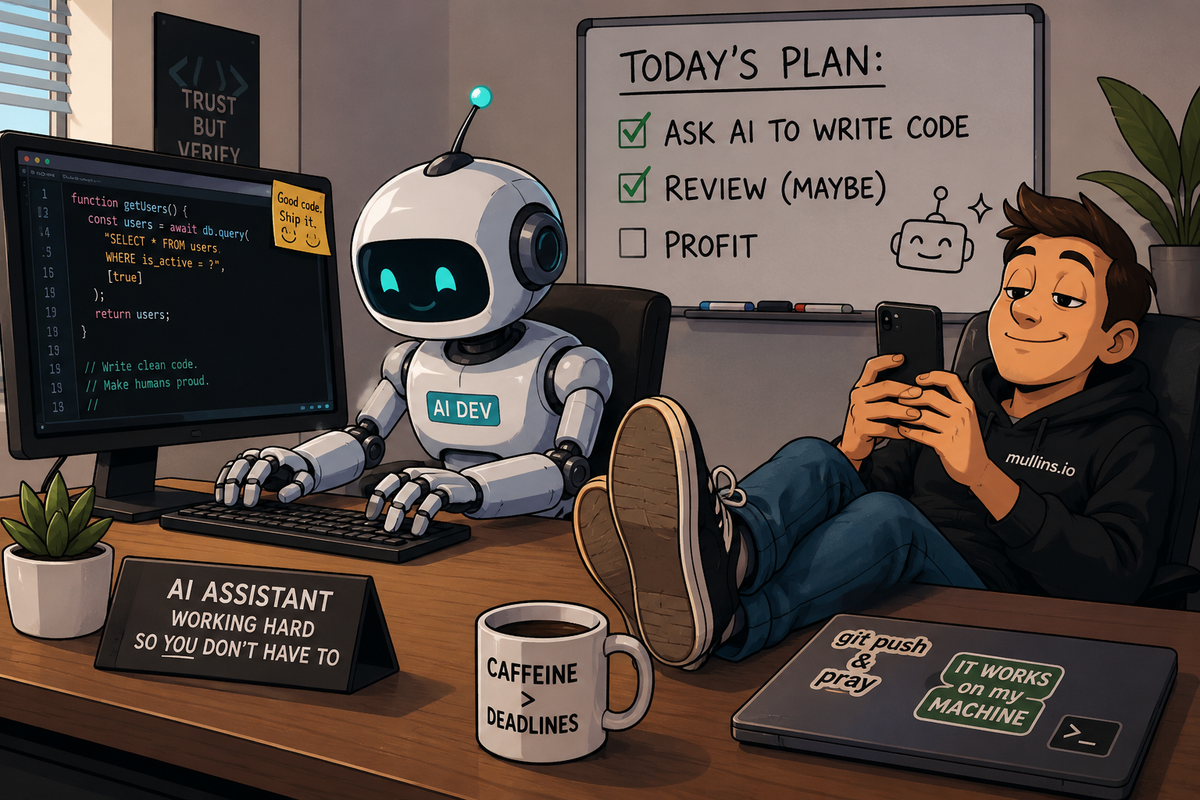

AI Is a Tool. Stop Treating It Like a Replacement for Thinking.

Everybody wants the shortcut.

That is the real story behind the AI boom.

Not innovation.

Not productivity.

Not transformation.

Shortcuts.

People want faster code. Faster content. Faster debugging. Faster delivery. Faster promotions. Faster startups. Faster everything.

And honestly? I get it.

Software development has always been a pressure cooker. Deadlines pile up. Requirements change halfway through implementation. Leadership wants estimates you cannot realistically provide. Production incidents appear at 2:13 AM because somebody somewhere decided Friday at 4 PM was the perfect time for a deployment.

Now generative AI shows up, and suddenly developers can scaffold APIs in seconds, generate tests instantly, summarize logs, write SQL queries, build documentation, and explain obscure frameworks without opening fifteen Stack Overflow tabs.

Of course, the industry exploded.

The tools are genuinely useful.

That part is true.

But somewhere along the way, a dangerous mindset began to creep into engineering culture.

People stopped treating AI like a tool.

They started treating it like a substitute for understanding.

That is where things start going sideways.

Because AI can absolutely help you write software.

It can also help you build an unmaintainable dumpster fire faster than ever before.

And the scary part?

Some developers cannot tell the difference anymore.

AI Is the New Calculator Problem

When calculators became common in schools, people panicked.

"Kids will never learn math."

Then Google arrived.

"People will never remember anything anymore."

Then Stack Overflow exploded.

"Developers are just copying code they do not understand."

Now that AI has entered the room, we are hearing the same argument again.

Some of those concerns are exaggerated.

Some are absolutely valid.

Here is the reality:

Good developers use AI the same way good developers use Google.

As leverage.

Not as a replacement for judgment.

The problem is not using AI.

The problem is surrendering your brain to it.

There is a massive difference between:

“I used AI to help brainstorm an architecture pattern.”

…and

“I pasted code into production because the robot sounded confident.”

Confidence is cheap.

LLMs are extremely good at sounding correct.

That does not mean they are correct.

Sometimes they are brilliant.

Sometimes they hallucinate entire libraries.

Sometimes they introduce security vulnerabilities that would cause a junior engineer to fail an internship review.

And sometimes they produce code that technically works while quietly setting your future team on fire.

That last one is the dangerous category.

Because broken code gets caught.

Fragile systems often survive just long enough to become everybody else’s problem.

"Vibe Coding" Is Not Engineering

There is a trend floating around the industry right now where people brag about barely touching code anymore.

They prompt.

They regenerate.

They copy.

They paste.

They call it "vibe coding," as if it were some revolutionary evolution in software development.

Listen.

If you are experimenting with side projects? Fine.

If you are prototyping? Cool.

If you are learning? Totally reasonable.

But if you are building production systems without understanding the code being generated, you are not engineering.

You are gambling.

And eventually the house wins.

The problem with blindly trusting AI-generated code is not just technical debt.

It is intellectual debt.

You slowly stop developing problem-solving skills.

You stop learning why systems behave the way they do.

You stop understanding tradeoffs.

You stop recognizing bad patterns.

Then one day, production breaks.

The AI output no longer works.

And suddenly you are standing in the middle of a burning system trying to debug logic you never actually understood.

That is not an AI problem.

That is a craftsmanship problem.

Working Code Does Not Mean Good Code

One of the biggest misconceptions in software engineering is the idea that if code works, it must be acceptable.

No.

Absolutely not.

A surprising amount of terrible software technically works.

Until scale happens.

Until traffic increases.

Until security reviews begin.

Until edge cases appear.

Until another developer has to maintain it.

AI is very good at producing code that appears functional.

That does not mean it is secure.

That does not mean it is maintainable.

That does not mean it is readable.

That does not mean it aligns with your architecture.

And it definitely does not mean it fits your organization’s operational standards.

A lot of AI-generated code feels like somebody speedran Stack Overflow from 2017.

Because in many ways, that is exactly what happened.

The model learned from billions of lines of public code.

Some excellent.

Some terrible.

Some outdated.

Some outright dangerous.

So if you ask an AI to quickly generate authentication logic, there is a very real chance it gives you something vulnerable, outdated, or architecturally messy.

And if you do not know enough to recognize the problem?

Congratulations.

You just merged technical debt with confidence.

Treat AI Like a Junior Developer

This is probably the healthiest mental model.

AI is an extremely fast junior developer.

Sometimes brilliant.

Sometimes concerning.

Always requiring review.

You would never take a junior engineer’s pull request and immediately deploy it to production without inspection.

So why are people doing exactly that with AI?

A responsible engineer reviews AI-generated code the same way they review human-generated code.

Actually, more aggressively.

Because AI does not truly understand your business context.

It does not take your operational constraints into account.

It does not understand your customers.

It does not understand regulatory requirements.

It predicts.

That is fundamentally different.

The strongest developers I know use AI constantly.

But they use it intentionally.

They ask questions.

They compare approaches.

They validate assumptions.

They challenge outputs.

They use AI to accelerate thinking.

Not to replace it.

Responsible AI Starts With Accountability

There is one rule that matters more than all the others.

You own what you ship.

Not the AI.

Not the prompt.

Not the tooling.

You.

If insecure code reaches production because you copied AI output without understanding it, that is still your responsibility.

If customer data leaks because somebody pasted proprietary information into a public model, that is still your responsibility.

If your AI-generated feature discriminates against users because nobody tested edge cases, that is still your responsibility.

The industry needs to stop acting like AI somehow changes accountability.

It does not.

The keyboard changed.

Responsibility did not.

Bias Is Not Just a Social Problem

When people hear the word "bias" in AI conversations, they often immediately think about politics.

But bias in software systems is broader than that.

Bias appears anywhere systems unfairly favor one outcome over another.

And AI absolutely inherits bias from training data.

Because humans created the data.

Humans are messy.

So the models become messy too.

This matters far beyond social media arguments.

It matters in hiring systems.

Loan approvals.

Healthcare recommendations.

Insurance calculations.

Educational software.

Criminal justice systems.

Search rankings.

Recommendation engines.

Anywhere software influences decisions about people.

A famous example involved COMPAS, a recidivism risk-scoring algorithm used in U.S. courts.

The system attempted to predict which defendants were most likely to reoffend.

The controversy was not just about prediction accuracy.

It was about how errors disproportionately impacted different groups.

That is the kind of thing developers cannot afford to casually ignore.
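To make that concrete, here is a minimal sketch of checking error rates per group instead of trusting one overall accuracy number. The records are made up for illustration (they are not COMPAS data), and the function names are hypothetical.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Return {group: fraction of correct predictions}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}

def false_positive_rate_by_group(records):
    """Return {group: share of actual negatives that were flagged positive}."""
    false_positives = defaultdict(int)
    actual_negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            actual_negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {
        group: false_positives[group] / count
        for group, count in actual_negatives.items()
    }

# Synthetic records: (group, predicted_high_risk, actually_reoffended)
records = [
    ("A", True, False), ("A", False, True), ("A", True, True), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", True, True), ("B", False, False),
]

accuracy = accuracy_by_group(records)
fpr = false_positive_rate_by_group(records)
```

In this toy data both groups have the same accuracy, yet group B is wrongly flagged more often than group A. A single headline metric would hide that.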

And before somebody says, “Well, I just build APIs,” remember this:

Every system eventually affects humans.

Even internal tooling.

Especially at scale.

Responsible engineering means testing systems beyond happy paths.

It means questioning outputs.

It means recognizing that data is not magically objective just because a machine processed it.

AI Can Absolutely Create Security Problems

Here is the uncomfortable truth.

AI-generated code can look polished while quietly introducing severe vulnerabilities.

Sometimes it uses outdated libraries.

Sometimes it invents insecure implementations.

Sometimes it recommends practices abandoned years ago.

And sometimes developers accept it all because the syntax looks clean.

Attackers do not care whether vulnerable code came from a human or a model.

A SQL injection vulnerability still works.

Weak authentication is still weak.

Exposed secrets are still exposed.
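Here is a small sketch of the SQL injection case, using Python's built-in `sqlite3` module. The table, the function names, and the payload are invented for illustration. The first function is the kind of thing that looks clean in a diff and still leaks every row.

```python
import sqlite3

def find_user_unsafe(conn, username):
    # Vulnerable: user input is concatenated straight into the SQL text.
    query = "SELECT id, username FROM users WHERE username = '" + username + "'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, username):
    # Parameterized: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, payload)  # the OR clause matches every row
blocked = find_user_safe(conn, payload)   # treated as a literal name, matches nothing
```

Both functions return the same result for a normal username. Only a reviewer who knows what to look for, or a test with hostile input, will notice the difference.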

The industry already struggles with shipping secure software written by humans.

Now we are dramatically increasing development velocity without a proportional increase in review discipline.

That should concern everybody.

Every AI-generated output should be treated as untrusted until verified.

That means:

- Static analysis

- Security scanning

- Dependency validation

- Architecture review

- Penetration testing

- Edge case testing

- Human inspection

All of it.

Because AI does not magically remove engineering responsibility.

It increases the need for mature engineering practices.

Stop Pasting Sensitive Data Into Public Models

This should not need to be said again.

And yet.

People keep doing it.

Developers paste proprietary code into public AI tools.

Internal documents.

Customer information.

Production logs.

Database exports.

Confidential meeting notes.

Entire architectures.

Then everybody acts shocked when organizations start locking down tools.

In 2023, Samsung engineers reportedly pasted sensitive internal information, including source code, into ChatGPT.

Multiple times.

That incident alone should have been a giant wake-up call.

If you paste data into an external model, you need to understand exactly where that data goes, how it is stored, whether it is retained, and whether it could influence future training.

Responsible AI usage requires discipline.

Sanitize information.

Use placeholders.

Strip secrets.

Never expose real customer data.

And for the love of all things holy, stop pasting production credentials into prompts.

Yes.

People have actually done this.
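As a rough sketch of what "sanitize, use placeholders, strip secrets" can look like in practice, the snippet below redacts a few obvious patterns before text goes anywhere near a prompt. The rules and the `sanitize_for_prompt` name are assumptions for illustration. A regex list like this is a seatbelt, not a guarantee, and it does not replace a proper secret scanner or an approved-tools policy.

```python
import re

# Illustrative patterns only; a real list would be broader and organization-specific.
REDACTION_RULES = [
    # Email addresses
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "<EMAIL>"),
    # Strings shaped like AWS access key IDs (AKIA followed by 16 characters)
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "<AWS_ACCESS_KEY_ID>"),
    # key=value or key: value pairs with secret-sounding names
    (
        re.compile(r"(?i)\b(password|passwd|secret|token|api_key)\s*[=:]\s*\S+"),
        r"\1=<REDACTED>",
    ),
]

def sanitize_for_prompt(text):
    """Apply every redaction rule in order and return the cleaned text."""
    for pattern, replacement in REDACTION_RULES:
        text = pattern.sub(replacement, text)
    return text

log_line = (
    "login failed for jane.doe@example.com "
    "password=hunter2 key=AKIAABCDEFGHIJKLMNOP"
)
clean = sanitize_for_prompt(log_line)
```

The point is the habit: run the text through a filter first, then read what is left before you paste it.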

Transparency Matters More Than People Think

A weird thing happens when AI generates complex logic.

Developers sometimes skip documentation because they did not fully build the mental model themselves.

That becomes a disaster later.

If your team cannot explain how a system works, your team does not control the system.

The system controls you.

Every engineer should be able to explain AI-assisted code line by line.

Not vaguely.

Not conceptually.

Actually explain it.

If you cannot explain it, you should not merge it.

And honestly?

Your pull requests should say when AI significantly influenced the implementation.

Not because AI usage is bad.

Because transparency matters.

Future maintainers deserve context.

Debugging deserves context.

Security reviews deserve context.

There is no shame in AI-assisted development.

The shame comes from presenting generated code as your own work when no one, including you, understands how it works.

The Black Box Problem Is Real

Some AI-generated systems become black boxes incredibly fast.

Especially when developers repeatedly regenerate sections until something finally works.

That workflow creates systems nobody intentionally designed.

Think about that for a second.

The architecture becomes accidental.

Logic chains become inconsistent.

Patterns drift.

Abstractions stop making sense.

Then, eventually, the system encounters an edge case.

Now your team is reverse-engineering production behavior like digital archaeologists trying to understand an ancient civilization.

This is one reason senior engineering judgment matters more than ever.

AI can generate solutions.

It cannot reliably generate coherent long-term architecture without strong human guidance.

Systems need intentionality.

That still comes from people.

High-Risk Industries Cannot Afford Carelessness

Some sectors simply carry higher consequences.

Healthcare.

Finance.

Education.

Government.

Defense.

Infrastructure.

If your software influences patient outcomes, financial calculations, legal decisions, or public systems, responsible AI usage becomes even more critical.

You cannot shrug off mistakes with “the AI suggested it.”

Imagine a healthcare system that uses AI-generated logic and mishandles dosage calculations.

Or a financial platform introducing rounding issues into transactional systems.

Or educational software confidently teaching students incorrect information because hallucinations slipped through the validation process.

These are not far-fetched scenarios.

The stakes become very real very quickly.
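The rounding example is easy to show. This sketch uses Python's standard `decimal` module. The amounts and the `to_cents` helper are made up, and the half-up rounding rule is an assumption. In a real system the rounding rule is a business and regulatory decision, not something to accept from a generated snippet.

```python
from decimal import Decimal, ROUND_HALF_UP

# Binary floats cannot represent most decimal fractions exactly.
float_total = 0.1 + 0.2
float_matches = float_total == 0.3      # False
float_rounded = round(2.675, 2)         # 2.67, because 2.675 is stored slightly low

# Decimals built from strings keep exact decimal values.
decimal_total = Decimal("0.10") + Decimal("0.20")
decimal_matches = decimal_total == Decimal("0.30")  # True

def to_cents(amount):
    """Round a Decimal amount to two places with an explicit rounding mode."""
    return amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

decimal_rounded = to_cents(Decimal("2.675"))            # Decimal("2.68")
fee = to_cents(Decimal("19.99") * Decimal("0.075"))     # Decimal("1.50")
```

A float-based version passes a casual test and drifts by a cent in production. That is exactly the kind of bug that survives a happy-path review.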

And this is where tech culture sometimes frustrates me.

Too many people still approach AI discussions with startup-energy optimism instead of operational realism.

“Move fast and break things” sounds fun until the broken thing is healthcare software.

Or financial reporting.

Or infrastructure.

Responsible engineering means understanding consequences.

Not just velocity.

Organizations Need Actual AI Standards

Telling developers to “use common sense” is not a strategy.

Organizations need real guidance.

Not performative policy PDFs nobody reads.

Actual operational standards.

Good engineering organizations should establish AI usage frameworks covering:

- What tools are approved

- What data can be shared

- What systems require additional oversight

- How AI-generated code is reviewed

- Documentation expectations

- Security requirements

- Ownership expectations

- Escalation procedures

And importantly:

These standards should be built with engineers.

Not dumped onto them by disconnected leadership teams who barely understand the technology.

The fastest way to create resentment is writing restrictive policies without involving the people doing the work.

Responsible AI adoption works best when teams collaborate on guardrails.

Because good engineers generally want to do things correctly.

They just need clarity.

Code Review Needs to Evolve

Traditional code review processes were designed around human mistakes.

AI introduces different problems.

Now reviewers need to watch for:

- Hallucinated dependencies

- Outdated security patterns

- Overengineered abstractions

- Hidden performance problems

- Inconsistent architectural decisions

- Phantom functions

- Generated code bloat

- Logic nobody can explain
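Some of these checks can be partly automated. As one example, the sketch below flags imports in a generated Python snippet that do not resolve in the current environment, which is a cheap first pass for hallucinated dependencies. The `unresolved_imports` name and the fake module are hypothetical. A module that does resolve is not automatically trustworthy, so this complements dependency review rather than replacing it.

```python
import ast
import importlib.util

def unresolved_imports(source):
    """Return top-level module names imported by `source` that cannot be found locally."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports, which refer to the project itself
            modules.add(node.module.split(".")[0])
    return sorted(
        name for name in modules if importlib.util.find_spec(name) is None
    )

generated = """
import json
from collections import OrderedDict
import totally_made_up_http_client
"""

missing = unresolved_imports(generated)
```

Here `missing` contains only the invented module. The standard library imports resolve and are left alone.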

That changes the review process.

Reviewers need more context.

Teams need better tooling.

Security scanning becomes more important.

Documentation becomes more important.

And honestly?

Teams probably need to slow down a little.

I know.

Nobody likes hearing that.

But speed without quality eventually leads to reduced productivity.

Every engineering team eventually pays its debt.

The only question is whether you pay gradually or all at once during a catastrophic production incident.

AI Should Make You Better at Thinking

This is where I think a lot of people are using AI incorrectly.

The best usage patterns are interactive.

Exploratory.

Educational.

Not passive.

Instead of saying:

“Write the code for me.”

Try:

“Explain the tradeoffs between these approaches.”

Or:

“Generate pseudocode so I can implement it myself.”

Or:

“What edge cases am I missing?”

Or:

“Challenge this architecture decision.”

Those kinds of prompts sharpen thinking.

They force engagement.

They improve understanding.

They create better engineers.

AI becomes dangerous when developers stop thinking critically.

Because software engineering has never really been about syntax.

Syntax is the easy part.

Engineering is judgment.

Tradeoffs.

Systems thinking.

Communication.

Risk management.

Understanding people.

AI can assist with many things.

But judgment still matters.

A lot.

Testing Matters More Than Ever

AI dramatically increases output speed.

That means testing discipline has to increase, too.

You cannot accelerate code generation while leaving validation exactly where it was.

That math does not work.

If AI helps you build software twice as fast, your review and testing strategies need to mature accordingly.

Especially around edge cases.

AI is usually decent at happy-path implementations.

But weird conditions?

Null handling.

Concurrency.

Massive datasets.

Race conditions.

Operational failures.

Unexpected state transitions.

Those are the areas where fragile systems get exposed.

Aggressive testing is not optional anymore.

It is survival.
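A tiny sketch of what that looks like: a happy-path function of the kind AI tends to produce, next to a version that handles the awkward inputs. Both functions and the `raises` helper are invented for illustration, and returning `None` for "no data" is an assumption you would settle with your team.

```python
def average_latency_ms(samples):
    """Happy-path version: fine until `samples` is empty or contains None."""
    return sum(samples) / len(samples)

def average_latency_ms_checked(samples):
    """Same calculation with explicit handling for missing data."""
    values = [s for s in (samples or []) if s is not None]
    if not values:
        return None  # assumption: "no data" is reported as None, not 0
    return sum(values) / len(values)

def raises(exc_type, func, *args):
    """Return True if calling func(*args) raises exc_type."""
    try:
        func(*args)
    except exc_type:
        return True
    return False

# The happy path passes, which is why the first version gets merged.
happy_path_ok = average_latency_ms([100, 200, 300]) == 200

# The edge cases are where it falls over.
empty_breaks = raises(ZeroDivisionError, average_latency_ms, [])
none_breaks = raises(TypeError, average_latency_ms, [100, None])
```

If your test suite only contains the first assertion, you have not tested the code. You have confirmed the demo.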

Junior Developers Still Need Foundational Skills

This part matters a lot.

Especially for newer engineers.

AI can absolutely accelerate learning.

But it can also stunt development if used incorrectly.

If every difficult problem immediately gets outsourced to AI, foundational problem-solving muscles never develop.

And those foundational skills matter.

Data structures.

Algorithms.

Debugging.

Architecture.

Reading documentation.

Understanding systems.

Learning how to think through problems.

The strongest engineers are not the ones who memorize syntax.

They are the ones who can reason through uncertainty.

AI should help build those abilities.

Not replace them.

Honestly, I think one of the healthiest habits early-career developers can build is alternating between AI-assisted workflows and fully manual workflows.

Sometimes, force yourself to solve things independently.

Struggle a little.

That discomfort is where growth happens.

Metrics Actually Matter

A lot of organizations adopted AI because everyone else did.

That is not a strategy.

That is fear.

If teams are going to integrate AI into engineering workflows, leadership needs to measure outcomes honestly.

Did delivery improve?

Did incident frequency increase?

Did code quality decline?

Did onboarding improve?

Did recovery times worsen?

Did technical debt spike?

Because velocity metrics alone are dangerous.

Shipping more code faster means nothing if reliability collapses six months later.

And some organizations are absolutely heading toward that cliff right now.

The real value of AI is not measured in lines of code.

It is measured in sustainable engineering effectiveness.

That is much harder to quantify.

But it matters infinitely more.

Responsible AI Is Bigger Than Code

This conversation extends beyond software engineering.

AI impacts hiring.

Healthcare.

Education.

Creativity.

Journalism.

Finance.

Public policy.

Human behavior.

And eventually society itself.

Developers cannot hide behind “I just build the systems.”

That mindset stopped working a long time ago.

Technology shapes lives.

Sometimes dramatically.

That means ethical thinking cannot be separated from engineering anymore.

And no, this does not mean developers suddenly need philosophy degrees.

It just means responsibility matters.

Consequences matter.

Intentionality matters.

You do not need perfection.

You need awareness.

You need discipline.

You need humility.

AI Is Not Going Away

Some people are still trying to fight AI as if it were a temporary trend.

It is not.

The tooling will only improve.

The workflows will evolve.

Organizations will continue integrating it.

And honestly?

Many developers who learn to use AI responsibly will become dramatically more effective.

That is reality.

But the future does not belong to people who blindly automate everything.

And it does not belong to those who refuse to adapt.

It belongs to engineers who can combine human judgment with powerful tooling.

That combination is incredibly valuable.

Because AI still lacks something important.

Wisdom.

Context.

Ethics.

Taste.

Responsibility.

Long-term thinking.

Human understanding.

Those things still matter.

Probably more than ever.

You Are Still the Pilot

There is a phrase I keep coming back to.

AI is the copilot.

You are still the pilot.

That distinction matters.

A copilot can help navigate.

A copilot can assist with execution.

A copilot can reduce workload.

But the pilot remains responsible for the flight.

Software engineering works the same way.

Use AI.

Experiment with it.

Learn it deeply.

Leverage it aggressively.

But do not surrender your judgment to it.

Do not outsource your thinking.

Do not stop learning fundamentals.

Do not ignore security.

Do not abandon craftsmanship.

And definitely do not mistake generated output for engineering wisdom.

Responsible AI use is not really about the AI.

It is about the humans using it.

The developers.

The leaders.

The organizations.

The choices.

The accountability.

That is the real story.

The developers who thrive in this next era will not be the ones who type prompts the fastest.

They will be the ones who understand when not to trust the machine.

And honestly?

That has always been the job.

Good engineering was never just about writing code.

It was always about judgment, accountability, and thinking clearly under pressure.

That part has not changed.